Udine, August 20th 2019

Testing is one of the most important activities when developing an application or, in the case of SupplHi, a complex SaaS Vendor Management platform. It is difficult to convey the value of a good test system because the result is often intangible: everyone understands that quality improves, but the benefit is hard to weigh against the cost of achieving it.

Furthermore, the Lean methodology that SupplHi has adopted for developing its platform, with its frequent releases, can push a team to sacrifice tests to meet deadlines, so that only the new modules in each release are tested rather than the entire application. This is the typical scenario that causes so-called “regressions”: anomalies in components that were not part of a release but that, for some reason, have stopped working correctly.

At SupplHi we have limited these kinds of problems with two complementary but very different approaches: decoupling of the components and automated testing.

To reach its Software Quality Assurance targets, also in line with the ISO/IEC 27001:2013 certification obtained in July 2019, SupplHi’s Tech Team embraced the challenge: identifying and resolving problems, choosing the most suitable tools and adopting an innovative approach.

THE DECOUPLING OF COMPONENTS ADOPTED BY SUPPLHI

The decoupling of components is very important because it reduces the possibility that changes to one component will affect other parts.

Every application of the SupplHi platform is developed with a “three-tier architecture” that draws a clear division between the three layers “Presentation”, “Business Logic” and “Data Access”.

The Presentation layer (Frontend) is built in Angular 7, a framework created by Google that out of the box encourages an MVVM pattern (Model-View-ViewModel). Thanks to its component-oriented approach and its use of the IoC pattern (Inversion of Control), it allows very robust, flexible and highly decoupled applications to be built. The Frontend communicates with the other layers by calling dedicated APIs (Application Programming Interfaces).

The Backend (which encapsulates the Business Logic and Data Access layers) is responsible for retrieving data from the database, processing it and supplying it in a format that the Frontend can interpret. It follows a microservice architecture built with Spring Boot, the de facto standard framework for enterprise Java web development. This framework is probably the most advanced available on the market today and gives developers many tools to implement the architectural patterns characteristic of a good modern web application (MVC, IoC, DAO based on ORM, Factory, Proxy, …).

For SupplHi, data quality is one of its principal assets, so it was natural to start implementing tests on the Backend, which is responsible for retrieving and processing data, in order to verify the responses generated by the APIs.

The tests can generally be classified as follows:

- Unit Tests, which test the business logic of a single method;

- Integration Tests, which test how multiple components of the application interact and cover different methods found in one or more classes;

- End-to-end Tests, which simulate the use of the application by a user in a simulated environment.

Given the high level of decoupling attained, the methods that make up our APIs often have such simple logic that they make the Unit Tests superfluous. We have therefore limited this type of test to methods rich in business logic or with particularly complex algorithms.

THE INTEGRATION TESTS ADOPTED BY SUPPLHI

For Integration Tests the problem is much more widespread: several hundred APIs are exposed to the frontend, so the probability that a modification, however localized, will affect many APIs is very high.

The basic idea behind our Integration Test platform is to call every API with fixed input parameters and compare the response with a consolidated result. This approach lets us catch APIs that, following a change, no longer return the expected result and would consequently cause problems for the frontend.

We therefore had to build tests that simulate a real frontend call and compare the response with one that we had already verified as correct.
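The idea can be illustrated with a minimal, hypothetical sketch in plain Java. It is not the SupplHi test code: the endpoint names, the `GoldenResponseCheck` class and the in-memory stand-in for the backend are all assumptions made for illustration, and exact string equality is used only to keep the example short.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Function;

// Hypothetical sketch: names and data are illustrative only.
class GoldenResponseCheck {

    // One regression case: a fixed input plus the response already verified as correct.
    static final class ApiCase {
        final String endpoint;
        final String input;
        final String expected;

        ApiCase(String endpoint, String input, String expected) {
            this.endpoint = endpoint;
            this.input = input;
            this.expected = expected;
        }
    }

    // Calls every endpoint with its fixed input and returns the endpoints
    // whose current response differs from the stored reference.
    static List<String> findRegressions(Map<String, Function<String, String>> api,
                                        List<ApiCase> cases) {
        List<String> broken = new ArrayList<>();
        for (ApiCase c : cases) {
            String actual = api.get(c.endpoint).apply(c.input);
            if (!c.expected.equals(actual)) {
                broken.add(c.endpoint);
            }
        }
        return broken;
    }

    // In-memory stand-in for the backend; the second endpoint has "regressed".
    static Map<String, Function<String, String>> demoApi() {
        Map<String, Function<String, String>> api = new LinkedHashMap<>();
        api.put("/vendors/count", in -> "{\"count\":3}");
        api.put("/vendors/status", id -> "{\"id\":" + id + ",\"status\":\"active\"}");
        return api;
    }

    static List<ApiCase> demoCases() {
        return List.of(
                new ApiCase("/vendors/count", "", "{\"count\":3}"),
                new ApiCase("/vendors/status", "7", "{\"id\":7,\"status\":\"ACTIVE\"}"));
    }

    public static void main(String[] args) {
        System.out.println(findRegressions(demoApi(), demoCases()));
    }
}
```

In this sketch only `/vendors/status` is reported, because its current response no longer matches the stored reference.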

The problems we faced were therefore:

- How to make a call to the backend from a backend test?

- How to authenticate and obtain a token to use in all of our calls?

- How to validate a response from an API?

- How to provide our code with data that can be modified during a test but is restored at each new execution?

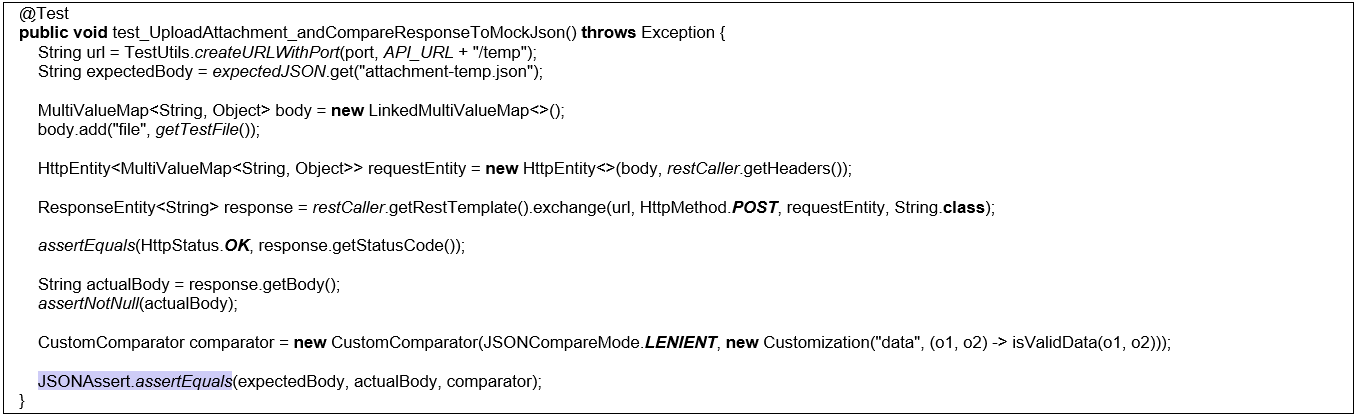

For the first question we found a simple answer. Spring Boot provides the TestRestTemplate class, an alternative to RestTemplate intended for Integration Tests. With TestRestTemplate it is possible to make HTTP calls through the exchange method, which takes as parameters the URL to call, the HTTP method (GET, POST, …), the HTTP request entity and the type expected for the response body (String, Long, …). All we had to do then was to define the HTTP request correctly by building its body and headers.

When defining the headers we ran into our second problem: we needed to authenticate, retrieve a token and use it for subsequent calls. Authentication is itself an HTTP call with certain login parameters passed through the headers; once we found the right configuration of parameters, we had the token to use in all subsequent calls. To do this we annotated the method that retrieves the token with “@BeforeClass”, which runs a method once before all the tests contained in the same class.
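The flow described so far (log in once, keep the token, send it in the header of every later call) can be sketched with JDK classes only. This is a stand-in, not the Spring code used at SupplHi: `java.net.http.HttpClient` plays the role of TestRestTemplate, a tiny in-process `HttpServer` plays the backend, and the paths, the `X-Demo-User` header and the token value are all hypothetical.

```java
import com.sun.net.httpserver.HttpExchange;
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.net.InetSocketAddress;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.nio.charset.StandardCharsets;

// Hypothetical sketch: JDK classes stand in for TestRestTemplate and the real backend.
class TokenCallSketch {

    static final String TOKEN = "demo-token"; // illustrative value

    // Tiny in-process server: /login issues a token, /api/vendors requires it.
    static HttpServer startFakeBackend() throws IOException {
        HttpServer server = HttpServer.create(new InetSocketAddress("127.0.0.1", 0), 0);
        server.createContext("/login", ex -> {
            boolean known = "tester".equals(ex.getRequestHeaders().getFirst("X-Demo-User"));
            reply(ex, known ? 200 : 403, known ? TOKEN : "forbidden");
        });
        server.createContext("/api/vendors", ex -> {
            boolean ok = ("Bearer " + TOKEN).equals(ex.getRequestHeaders().getFirst("Authorization"));
            reply(ex, ok ? 200 : 401, ok ? "[\"ACME\"]" : "unauthorized");
        });
        server.start();
        return server;
    }

    static void reply(HttpExchange ex, int status, String body) throws IOException {
        byte[] bytes = body.getBytes(StandardCharsets.UTF_8);
        ex.sendResponseHeaders(status, bytes.length);
        try (OutputStream out = ex.getResponseBody()) {
            out.write(bytes);
        }
    }

    // One HTTP call defined by method, URL and a single header; the body is read as a String.
    static HttpResponse<String> call(HttpClient client, String method, URI uri,
                                     String headerName, String headerValue) {
        HttpRequest request = HttpRequest.newBuilder(uri)
                .method(method, HttpRequest.BodyPublishers.noBody())
                .header(headerName, headerValue)
                .build();
        try {
            return client.send(request, HttpResponse.BodyHandlers.ofString());
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
    }

    // Logs in once, then reuses the token; returns the body of the protected call.
    static String runDemo() {
        HttpServer server;
        try {
            server = startFakeBackend();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        try {
            String base = "http://127.0.0.1:" + server.getAddress().getPort();
            HttpClient client = HttpClient.newBuilder().version(HttpClient.Version.HTTP_1_1).build();
            String token = call(client, "POST", URI.create(base + "/login"),
                    "X-Demo-User", "tester").body();
            return call(client, "GET", URI.create(base + "/api/vendors"),
                    "Authorization", "Bearer " + token).body();
        } finally {
            server.stop(0);
        }
    }

    public static void main(String[] args) {
        System.out.println(runDemo());
    }
}
```

In a JUnit 4 suite, the login step would live in the static method annotated with `@BeforeClass`, so that every test in the class shares the same token.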

Coming to the third problem, we needed a way to validate the result of a call. Our API responses are typically complex objects that can be represented as a JSON structure, so we associated each API with a reference response saved as a JSON file in the application resources. In the same “@BeforeClass” method used to retrieve the token, we added the code that reads the JSON files and stores them as Strings.

How can these Strings be compared with the call responses? Comparing the two strings directly was not a practical strategy: apart from possible differences in the formatting of the two JSONs, the content could include dates that change on every call. Our first solution was to transform the two JSONs into objects (using the Jackson library) and compare their most important properties, but this required a lot of time and a lot of code.

We found a second, more practical solution in the JSONAssert library, which is designed specifically for comparing two JSONs and allows custom comparators to be defined for specific comparison logic.
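To show what such a comparison has to do, here is a minimal sketch in plain Java. JSONAssert itself is not used: nested maps stand in for parsed JSON, and the `LenientCompareSketch` class, the field names and the `updatedAt` key are assumptions chosen for illustration.

```java
import java.util.Map;
import java.util.Objects;
import java.util.Set;

// Hypothetical sketch: nested maps stand in for parsed JSON documents.
class LenientCompareSketch {

    // Stored reference response and a fresh response that differs only in its timestamp.
    static final Map<String, Object> REFERENCE =
            Map.of("id", 7, "name", "ACME", "updatedAt", "2019-08-01T10:00:00Z");
    static final Map<String, Object> RESPONSE =
            Map.of("name", "ACME", "updatedAt", "2019-08-20T09:30:00Z", "id", 7);

    // Compares two objects field by field, ignoring key order and skipping
    // the values of keys that legitimately change on every call.
    @SuppressWarnings("unchecked")
    static boolean matches(Map<String, Object> expected, Map<String, Object> actual,
                           Set<String> volatileKeys) {
        if (!expected.keySet().equals(actual.keySet())) {
            return false; // a missing or extra field is always a difference
        }
        for (Map.Entry<String, Object> entry : expected.entrySet()) {
            String key = entry.getKey();
            if (volatileKeys.contains(key)) {
                continue; // the value is allowed to differ
            }
            Object want = entry.getValue();
            Object got = actual.get(key);
            boolean same = (want instanceof Map && got instanceof Map)
                    ? matches((Map<String, Object>) want, (Map<String, Object>) got, volatileKeys)
                    : Objects.equals(want, got);
            if (!same) {
                return false;
            }
        }
        return true;
    }

    public static void main(String[] args) {
        System.out.println(matches(REFERENCE, RESPONSE, Set.of("updatedAt"))); // true
        System.out.println(matches(REFERENCE, RESPONSE, Set.of()));            // false
    }
}
```

The set of skipped keys plays the role that a custom comparator plays in a JSON comparison library: the structure is still checked, but values known to vary between calls do not fail the test.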

The last problem was restoring the database to a reference state before the tests start. We chose the Flyway library, which updates a database by executing migration scripts when the application starts.

SQL files saved in a specific directory are treated as patches to the DB and, when named according to a particular syntax, are applied by Flyway in the right order. At each execution, Flyway records the executed files in a DB table, including each file’s checksum, so that patches already applied are not re-executed on subsequent runs.

In particular, for our case, the files executed by Flyway had to have the prefix R__, which marks a script as repeatable. This prefix alone was not sufficient: if the checksum saved in the DB matches the script’s checksum, Flyway rightly sees no modification and does not execute the script again. We therefore needed a way to force execution at the beginning of all tests.

Here too, Spring gave us the tools to solve our problem. We defined a test class with a single test annotated with @Sql, which allowed us to run a SQL script. Inside this script we wrote a simple query that clears the checksum in the Flyway table, forcing the scripts that update the DB to run again.
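The checksum logic behind this workaround can be modelled in a few lines of plain Java. This is a simplified, hypothetical model, not Flyway's implementation: an in-memory map stands in for the history table, CRC32 is used only as an example checksum, and the script name `R__reset_test_data.sql` is invented for illustration.

```java
import java.nio.charset.StandardCharsets;
import java.util.HashMap;
import java.util.Map;
import java.util.zip.CRC32;

// Hypothetical model of repeatable scripts; not Flyway's actual code.
class RepeatableScriptSketch {

    // Stand-in for the history table: script name -> checksum of the last applied version.
    private final Map<String, Long> history = new HashMap<>();
    int executions = 0;

    static long checksum(String sql) {
        CRC32 crc = new CRC32();
        crc.update(sql.getBytes(StandardCharsets.UTF_8));
        return crc.getValue();
    }

    // Runs the script only when no checksum is stored or the stored one differs.
    boolean migrate(String name, String sql) {
        long current = checksum(sql);
        Long stored = history.get(name);
        if (stored != null && stored == current) {
            return false; // unchanged script: skipped
        }
        executions++; // a real tool would execute the SQL here
        history.put(name, current);
        return true;
    }

    // Mirrors the query that clears the stored checksums before the tests start.
    void clearChecksums() {
        history.replaceAll((name, sum) -> null);
    }

    public static void main(String[] args) {
        RepeatableScriptSketch db = new RepeatableScriptSketch();
        String sql = "DELETE FROM vendor; INSERT INTO vendor VALUES (1, 'ACME');";
        System.out.println(db.migrate("R__reset_test_data.sql", sql)); // true: first run
        System.out.println(db.migrate("R__reset_test_data.sql", sql)); // false: same checksum
        db.clearChecksums();
        System.out.println(db.migrate("R__reset_test_data.sql", sql)); // true: forced re-run
    }
}
```

The second call is skipped because the checksum is unchanged; clearing the stored value is what makes the third call run the script again, which is the effect the test suite needs before each execution.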

THE VERIFICATION TOOLS USED BY SUPPLHI

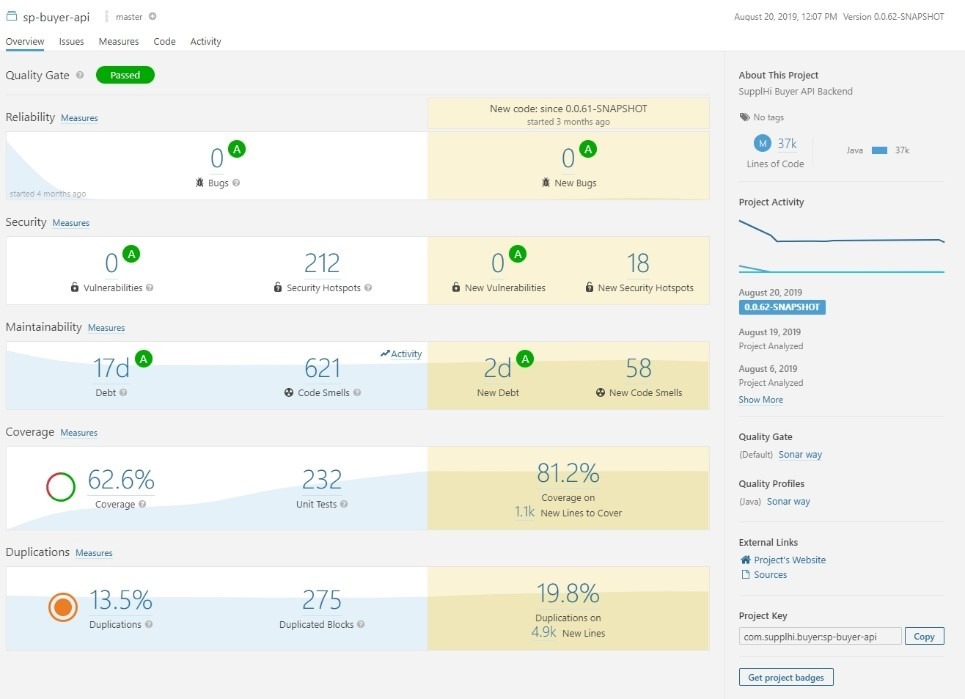

To conclude, in this article we have seen the tools used by SupplHi to develop Integration Tests in a rigorous way, significantly reducing the probability of regressions. For each endpoint of our backend we developed one or more automated tests that verify all the cases expected by our code. Using software quality tools like SonarQube and JaCoCo, we can monitor quality metrics and the percentage of code covered by our tests, making the effects of the tests measurable.

SonarQube report of a SupplHi application

The next challenge is to go further and automate the End-to-end Tests as well, with tools such as Selenium or Cypress that allow us to record a user’s actions and re-run them automatically.

Luca Riccitelli, Software Developer, SupplHi

Gabriele Muscas, CTO, SupplHi

Interesting articles for further information on Unit Tests:

https://www.baeldung.com/spring-boot-testing

https://www.mattianatali.it/come-sviluppare-rest-api-in-tdd-con-spring-boot/